
Increase Performance: Create an Analytics Scorecard for the Right KPIs

- By Robert J Zwerling, P.E., and Lawrence S Maisel, MBA, CPA

- Published: 8/15/2023

When a global cybersecurity company organized a Center of Excellence within its Global Operations Division, it decided to improve its key performance indicators reporting by implementing a reporting model that applied analytics to test the strength and magnitude of business drivers.

The AFP FP&A Case Study series is designed to help you build up key FP&A capabilities and skills by sharing examples of how leading practitioners have tackled challenges in their work and the lessons learned.

Insight: KPIs alone are not sufficient to drive consistent outperformance over time; companies can apply analytics to test the strength and magnitude of the business drivers.

| Attribute | Value |
| --- | --- |
| Company Size | Large |
| Industry | Cybersecurity |
| Geography | Global |
| FP&A Maturity Model | Performance Management |

Performance Management: The right information to the right person at the right time in the right format. SMART metrics drive business performance.

Background: General Information About the Company

The company is a global manufacturer and service provider of cybersecurity systems and software. It contracts out its manufacturing and delivers through third-party distributors and value-added resellers. The company recently organized a Center of Excellence within its Global Operations Division and sought to improve its key performance indicator (KPI) reporting to increase performance. The case authors are consultants brought in to implement analytics-driven KPI reporting.

Challenge: The Work or Difficulty FP&A Had to Address

The company was accustomed to its reporting and reluctant to adopt a new approach. As with any change, there was some resistance as the common sentiment was that it was better to deal with the current challenges than take on a new situation that could be worse.

The original scope of the project was across operations, where receptivity varied by group leader, ranging from hesitation to resistance. A review of the current state of KPI reporting resulted in the following assessment:

- What was called “KPI reporting” at the time was really a collection of stovepiped reports from individual functional groups, each containing analysis and some metrics.

- Reporting could not be traced or linked to corporate strategy.

- The value of reporting as it related to its impact on strategy was unclear.

- Reporting had limited comparative analysis to budget.

- Reporting had historical values with few forecasts.

- Reporting lacked unbiased, analytics-based confirmation of its impact on future performance.

Approach: How FP&A Addressed the Challenge

The consultants recommended creating an Analytics Scorecard™ (ASC), which adds depth to existing measures by layering four analytical components onto the existing strategy map:

- Quantitatively identify and validate the correlation between the strategic objectives and KPIs.

- Measure the strength and direction of the relationship between objectives and measures.

- Determine the threshold at which the relationship is valid.

- Calculate the impact level of the driver on the metric, e.g., a 5% rise in customer satisfaction above the threshold of 80% produces a 4% decrease in customer churn.
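The four components above can be illustrated with a minimal sketch. The case study does not describe the algorithms the consultants used, so everything here is an assumption: the data is synthetic (loosely modeled on the satisfaction-and-churn example), strength and direction are approximated with a Pearson correlation, and the threshold and impact are estimated with a simple hinge regression, `y = a + b * max(0, x - threshold)`. The helper names `pearson`, `fit_hinge`, and `find_threshold` are hypothetical.

```python
from math import sqrt

# Synthetic, illustrative data (NOT from the case study).
satisfaction = [70, 72, 74, 76, 78, 80, 82, 84, 86, 88, 90]  # driver, in %
churn = [10.0, 10.0, 10.0, 10.0, 10.0, 10.0, 8.4, 6.8, 5.2, 3.6, 2.0]  # metric, in %


def pearson(xs, ys):
    """Pearson correlation: the sign gives direction, the magnitude gives strength."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def fit_hinge(xs, ys, threshold):
    """Least-squares fit of y = a + b * max(0, x - threshold).

    Returns (a, b, sse): baseline level, slope above the threshold,
    and the sum of squared errors of the fit.
    """
    hs = [max(0.0, x - threshold) for x in xs]
    n = len(hs)
    mh, my = sum(hs) / n, sum(ys) / n
    shh = sum((h - mh) ** 2 for h in hs)
    b = sum((h - mh) * (y - my) for h, y in zip(hs, ys)) / shh
    a = my - b * mh
    sse = sum((y - (a + b * h)) ** 2 for h, y in zip(hs, ys))
    return a, b, sse


def find_threshold(xs, ys):
    """Pick the candidate threshold whose hinge fit has the smallest error."""
    candidates = sorted(set(xs))[:-1]  # drop the max so the hinge term still varies
    return min(candidates, key=lambda t: fit_hinge(xs, ys, t)[2])


r = pearson(satisfaction, churn)                      # strength and direction
threshold = find_threshold(satisfaction, churn)       # where the relationship starts
baseline, impact_per_point, _ = fit_hinge(satisfaction, churn, threshold)

print(f"strength and direction: r = {r:.2f}")
print(f"threshold: {threshold}%")
print(f"impact: {5 * impact_per_point:.1f} churn points per 5-point rise above threshold")
```

Because the synthetic data was built with a kink at 80% and a slope of -0.8 above it, the search recovers that threshold and slope. Real KPI data is noisy, so a production analysis would also need significance testing and out-of-sample validation, which this sketch omits.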

At the outset of the project, the goals of the ASC were established as follows:

- Ensure KPIs and metrics align with strategic objectives.

- Establish KPIs that are S.M.A.R.T., i.e., Specific, Measurable, Achievable, Relevant, and Time-bound.

- Use analytics assessment on each strategic objective to validate that the associated KPI does support its strategic objective.

- Develop the ASC Strategy Map to enable teams to see how their activities relate to each other and integrate with the strategic goals, so they know where they are, where they need to go, why something happened and whether corrective strategies are impactful.

- Engage leadership to define what success looks like for each KPI and communicate that to employees for coherent behavior.

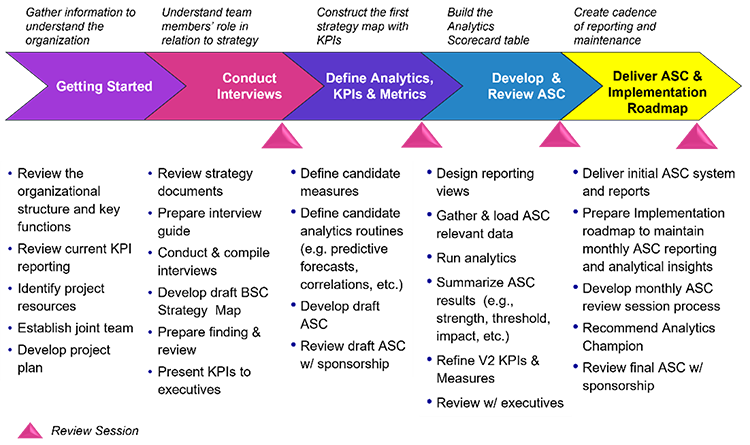

Analytics projects should start small, gain wins, build momentum, then scale. The figure below presents five high-level steps of the ASC project.

During the Develop & Review ASC phase, the ASC table is built. This table integrates the strategic objectives with their KPIs, and each KPI with the associated Measure, Validation Test, Strength & Direction, Threshold and Impact.

A KPI has a measure (a quantitative value). For that measure, a validation test confirms or refutes the KPI’s ability to affect its strategic objective and reports the strength and direction of that effect. Analytics goes further and tests for the threshold the KPI must reach to affect the strategic objective; below that threshold, the KPI has no effect on it. Finally, analytics calculates the impact the KPI will have on its strategic objective once the threshold is attained.
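One way to picture a row of the ASC table is as a small record type. This is only a sketch under stated assumptions: the class name `AscRow`, its field names and types, and the `affects_objective` helper are hypothetical, and the sample values are illustrative rather than taken from the company's scorecard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AscRow:
    """One hypothetical row of an ASC table: objective -> KPI -> analytics results."""

    strategic_objective: str
    kpi: str
    measure: str               # the quantitative value tracked for the KPI
    validation_passed: bool    # did the validation test confirm the KPI's effect?
    strength_direction: float  # e.g., a correlation coefficient in [-1, 1]
    threshold: float           # measure level at which the relationship becomes valid
    impact: float              # change in the objective per unit of measure above threshold

    def affects_objective(self, measure_value: float) -> bool:
        """The KPI is expected to move the objective only if it was validated
        and the measure has reached its threshold."""
        return self.validation_passed and measure_value >= self.threshold


# Illustrative values only (assumed, not reported in the case study).
row = AscRow(
    strategic_objective="Reduce customer churn",
    kpi="Customer satisfaction",
    measure="Satisfaction survey score (%)",
    validation_passed=True,
    strength_direction=-0.85,
    threshold=80.0,
    impact=-0.8,
)

print(row.affects_objective(85.0))  # above the threshold
print(row.affects_objective(75.0))  # below the threshold
```

Keeping the threshold and impact next to each KPI makes it explicit that a measure below its threshold is not expected to move the objective, which is the distinction the ASC table is meant to surface.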

The completed ASC along with the corresponding KPI reporting is delivered in the final Deliver ASC & Implementation Roadmap step.

Outcome: What Came of FP&A's Efforts and What Was Learned

The strategy map showed the team the connection between their roles and the overall corporate strategy. Each of the five corporate strategic principles was associated with a strategic theme; each theme had a number of related strategic objectives, and each objective had its own KPIs.

Each group then provided data that was loaded into an AI-enabled analytics platform to create analytics models. To complete the ASC table, each measure for each KPI was analyzed for the associated strength and direction, threshold, and impact of the measure on the KPI. A sample of findings is listed below for one strategic theme of customer centricity.

- Customer Growth Dynamics - Correlations: Revealed activities in sales orders and pricing that are weakening the relationship between the number of units sold (and their associated price) and the volume of deals closed.

- Customer Growth Dynamics - AI Predictive Signal (PS) and AI Probabilistic Forecast (PF): The PS for revenue indicated a weakness in achieving the 2x revenue growth strategic objective. However, the combined PS and PF gave a powerful, unbiased look into the future (especially with a deep dive into the data), enabling early action that can alter the current trajectory.

- Effective Problem Resolution - Correlations: The fact that problem cases and total RMAs are not correlated with the number of deal closings means there is good control (at the high level) from good-quality products and processes. However, RMAs one level deeper showed a surprisingly weak correlation with the external indicators of consumer sentiment and consumer price index (this may be coincidental but needs investigation).

- Effective Problem Resolution: Detailed analytics identified some products with persistent and systemic issues, even though the products fall within the bands of reliability tolerance.

- Order Processing - Operations Statistics: Showed the history of operations and can predict when it is about to change. Overall, operations have run largely within their norm, and there was no signal of impending change.

- Touchless Orders - Predictive Signal (PS): Revealed the key distributors and order pushback (i.e., error codes that drive pushbacks). EDI orders and manual orders contribute almost equally to order pushbacks, but the PS for EDI is decreasing while the PS for manual orders is flat. As such, EDI was predicted to improve even as order volume increases, but not necessarily manual orders.

The analytical engine produced the KPI reporting inclusive of probabilistic forecasts, predictive signals, and correlations that monitor operations toward the attainment of the strategic objectives. For the first time, Global Operations could visualize its connection with strategic principles and understand the contribution of each group’s actions in Global Operations to achieve those principles.

About the Authors

Maisel and Zwerling explain their ASC methodology more fully in their book AI-Enabled Analytics For Business, published by Wiley (Jesper Sorensen is a third co-author). Maisel is the President of DecisionVu, and Zwerling is the Managing Director of Aurora Predictions.
